Disney MoviesAnywhere:

Optimizing Power-User Workflows by 2,300%

How I adapted the 5-Day Design Sprint to navigate stakeholder "Calendar Tetris" and save 20,000+ person-hours.

Figure 1: Hero image for this case study showing the QC Spot Check Video and Image Review UIs, plus the Report screen used by producers to investigate issues as they are flagged.

Existing QC Tester Process

To conduct a quality control test on a given movie title, the QC Tester went through a process that entailed the following:

Manually browsing for and pulling asset files consisting of HLS/DASH versions of the feature and its trailers, images, chapter information, etc.;

Validating that they had the latest version of each file;

Opening and manually scrubbing through each of the video files;

Opening images and manually copying, pasting, and aligning them in Adobe Photoshop, using predefined templates that allowed them to pass or fail images based on size constraints in the interface; and

Documenting their findings at each step in a shared spreadsheet.

High-Level Issues

Task Switching and High Cognitive Load

This existing process was painstakingly tedious and required switching between multiple browser tabs, windows, and other applications. It was a task-switching nightmare that imposed a massive cognitive load, both for tracking where a given title was in the process and for carrying out the manual steps within each task.

3-4 Hours Per Title

On average, a QC Tester would spend at least three to four hours reviewing ONE movie title and its accompanying assets, and that’s IF they didn’t encounter any missing or out-of-date files. When they hit a snag with one title, resolving the issue wasn’t instant, so they would start reviewing another movie and return to the first once they got answers.

Data Normalization and Subjectivity Issues

The manual process invited mistakes and data normalization problems, and the criteria for passing or failing different types of assets were often subjective.

Accurate and Timely Reporting Impossible

Documenting issues, tracking statuses, and cataloging metadata spanned multiple applications, documents, and servers; aggregating these data into accurate reports in a timely manner was practically impossible.

Context

MoviesAnywhere.com is “… a partnership (initially with 20th Century Fox, Sony Pictures, Universal Pictures, and Warner Bros; later more were added) created by Disney Entertainment as a means through which consumers would access and enjoy movies on multiple platforms.” (Wikipedia).

A single movie title is composed of many component parts: HLS/DASH video files, trailers, images, chapter information, and all of the metadata for each of these. For Disney Movies Anywhere, which already existed and would evolve to become MoviesAnywhere.com, Disney Studios Technology employed a team of Cast Members whose sole job was to manually locate, aggregate, review, and document issues for movies already available for subscribers to view online across multiple platforms.

For MoviesAnywhere.com, 7,500+ movie titles needed to go through this QC process. With partner studios added to the mix, the manual workflow and processes were NOT going to scale successfully, nor would they allow for effective communication between studio producers. Leadership decided that back-office workflows and custom interfaces needed to be designed and developed so the teams in place would have the tools to complete this massive undertaking before launch.

That’s where I came in.

Approach

Research and Learning

5-Day Design Sprint Workshop for MVP Concept/Basic Prototype

Prototype Iteration

Usability Testing

Designing and Fleshing Out All Use Cases

Ongoing Reviews & Acceptance with QC, Producers, Engineers, Stakeholders, and Executives

Ongoing Dev Team Support

5-Day Design Sprint Workshop

Disney usually runs at a cadence of two-week Agile sprints, where design work is completed before a sprint begins, and the designer (me) has already negotiated scope and requirements and provided assets for the respective work before it starts. This kind of challenge required me to think differently about how to solve it.

I decided to conduct a 5-Day Design Sprint workshop to kick off the Design phase after completing the bulk of my research. The process, described in the book Sprint by Jake Knapp, is an incredibly efficient way to go from challenge to prototype in just five days. He provides a 90-second overview of the process in a YouTube video (below).

Research

I needed to learn about existing processes, tasks, documentation methods, friction points, perspectives, business goals, backend technologies being implemented, and much more. To do this, I conducted contextual inquiries and screen-recorded observational working sessions with Quality Control Testers, their managers, Producers, Engineers, Stakeholders, and Executives: 20 people total, with the counts per role as follows:

Quality Control Testers (6)

Quality Control Managers (2)

Producers (4)

Engineers (5)

Stakeholders & Executives (3)

The Process Pivot

It’s critical to conduct a workshop like this with participants involved for a full 40-hour week, Monday through Friday. Schedule conflicts (“Calendar Tetris”) would make this impossible unless I scheduled it months in advance, which was not a viable option. Instead, I ran a lean version of the workshop in a dedicated conference room I booked for the week.

The Challenge: High Stakes, Zero Alignment

The Goal: Clear a 7,500-title backlog before launch.

The Problem: The existing QC process took four hours per title.

The Bottleneck: Even the 40-hour Design Sprint was impossible because stakeholders (Founders, Leads, Engineers) couldn’t clear their calendars simultaneously.

The Solution

Rather than abandoning the rigor of a sprint, I acted as a designer-manager hybrid to drive the work forward:

Days 1 & 2 (Asynchronous Alignment): I independently handled initial goals, use-case mappings, and sketching design concepts. Instead of having stakeholders there for a full eight hours each day, I pulled them in (during breaks in their calendars) for brief checkpoints lasting between five and 30 minutes. I would then continue and iterate based on feedback and new insights as they surfaced.

Day 3 (Storyboarding): Once a direction was validated, I spent an entire day sketching storyboards that would be prototyped the next day.

Day 4 (Prototyping): For a power-user tool where speed is the primary goal, static boxes don’t suffice. I took storyboards from Day 3 and built them out with greater detail in Axure, with callouts for specific interactions/microinteractions that would be more challenging and need further exploration later.

Day 5 (Testing & Validation): I presented the prototype to lead stakeholders and had QC Testers sit down and do run-throughs of the various use cases. This vision hit home with the entire team, and I was given the blessing to move the project forward.

In the weeks that followed, I collaborated with Patricia Bellantoni (an incredibly talented UX colleague and mentor of mine at Disney) to:

Refine the overarching flow where necessary to allow for edge cases;

Detail out steps with greater specificity;

Build an interactive prototype that included necessary nuanced micro-interactions; and

Conduct additional usability tests with the same QC Testers who would potentially be future users.

Further, a QC Report made pass/fail results and qualitative notes available in real time after a movie title was tested. This allowed the internal MoviesAnywhere Producers to communicate more effectively with counterparts at partner studios to quickly resolve errors with assets delivered for ingestion.

Why I Skipped Wireframes

In this context, the user’s primary pain point was "time-to-resolution." You cannot test the efficiency of a keyboard-driven, high-speed workflow with a static wireframe. By moving directly to prototyping, I was able to test the actual feel and speed of the interface, which was the metric that mattered most.

The Result (“Hard” Metrics)

2,300% Increase in Efficiency: Reduced review time from 4 hours to 10 minutes per title.

20,000+ Person-Hours Saved: Enabled a small team to clear a massive multi-year backlog in just two months.

Technical Handoff: Provided a detailed specification and functional prototype that minimized engineering friction during the build phase.
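The arithmetic behind the first two figures can be sketched as follows. This is one plausible reading, not a statement of how the numbers were originally derived: it assumes "efficiency" means titles reviewed per unit of time, and it uses the 7,500-title backlog and the per-title times quoted in this case study. The function names are hypothetical.

```python
# Figures quoted in the case study (per-title times are approximate).
TITLES = 7_500             # titles in the backlog
MINUTES_BEFORE = 4 * 60    # about four hours per title with the manual process
MINUTES_AFTER = 10         # about ten minutes per title with the new tool


def efficiency_increase_pct(before: float, after: float) -> float:
    """Percent increase in titles reviewed per unit of time."""
    return (before / after - 1) * 100


def person_hours_saved(titles: int, before: float, after: float) -> float:
    """Hours saved across the backlog, given per-title review minutes."""
    return titles * (before - after) / 60


if __name__ == "__main__":
    # 240 / 10 = 24x throughput, i.e. a 2,300% increase.
    print(efficiency_increase_pct(MINUTES_BEFORE, MINUTES_AFTER))
    # Using the four-hour figure.
    print(person_hours_saved(TITLES, MINUTES_BEFORE, MINUTES_AFTER))
    # Using the low end of the three-to-four-hour range.
    print(person_hours_saved(TITLES, 3 * 60, MINUTES_AFTER))
```

Under these assumptions, even the three-hour low end works out to more than 20,000 hours saved across the backlog.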

Screenshots & Walkthroughs from Final Prototypes

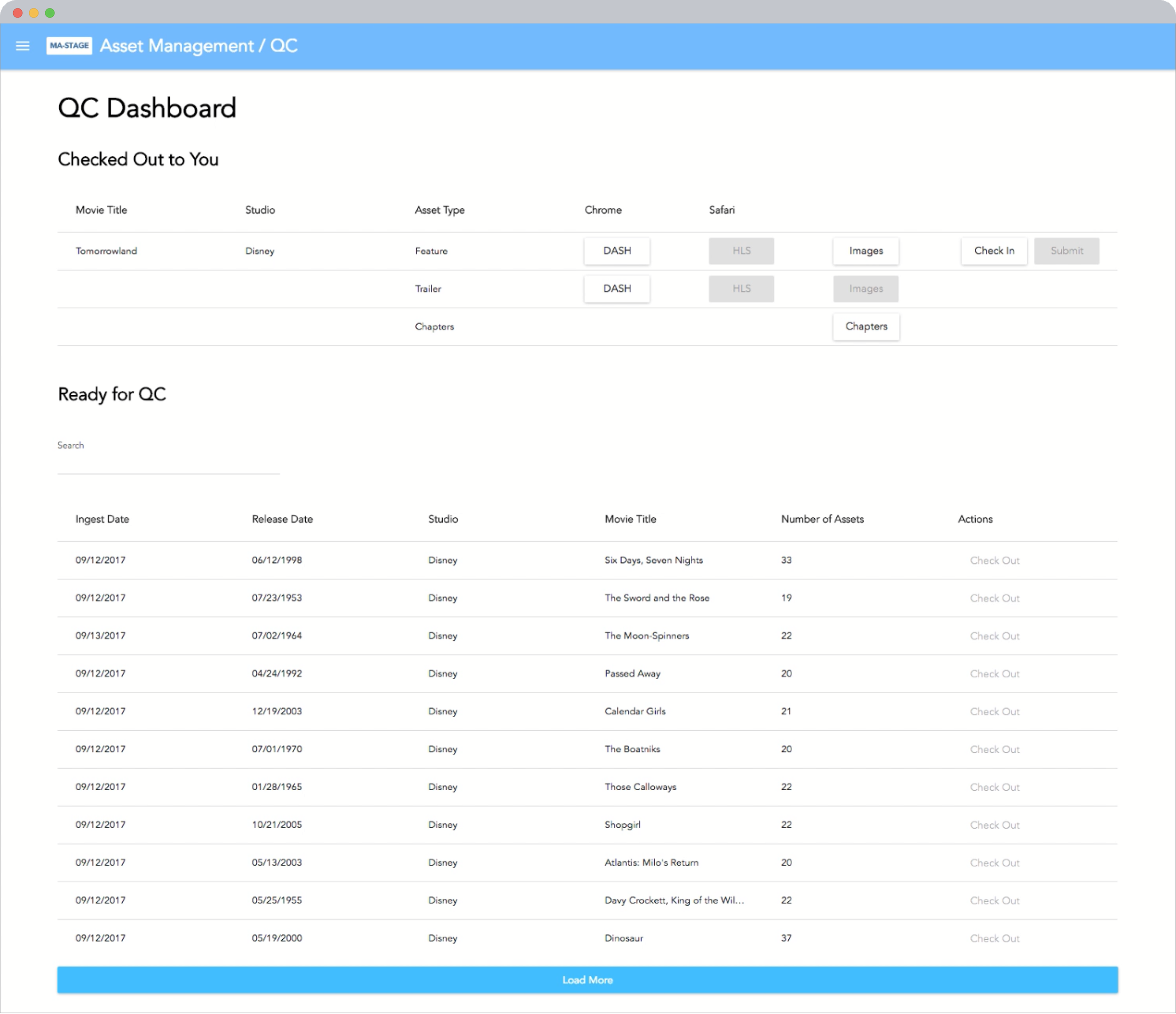

Figure 2. The QC Dashboard is an automatic queue from which the user can check out and check in a movie title that’s ready for testing.

Figure 3. The system presents the Trailer and Feature video files (in HLS and DASH formats) sequentially. While reviewing, the user can click a button corresponding to an A/V issue as soon as it is encountered; doing so automatically records the time and issue type, and a notes field allows for more qualitative detail.

CTAs on each step are dynamically labeled based on indicated errors, with some classified as severe enough to warrant an immediate fail and flagging of that title for follow-up by MoviesAnywhere Producers.
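As a rough illustration of the behavior described for the video review step, the sketch below models a flagged issue as a record of type, playhead time, and notes, and derives the CTA label from the issues logged so far. It is not the production implementation: the class names, issue types, severity set, and button labels are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical issue types treated as severe enough for an immediate fail.
SEVERE_ISSUES = {"missing_audio", "wrong_feature"}


@dataclass
class Issue:
    issue_type: str     # which issue button was clicked
    timestamp_s: float  # playhead position when it was clicked
    notes: str = ""     # optional qualitative details


@dataclass
class VideoReview:
    asset: str
    issues: List[Issue] = field(default_factory=list)

    def flag(self, issue_type: str, playhead_s: float, notes: str = "") -> None:
        """Record the issue type and time in one step, as one click would."""
        self.issues.append(Issue(issue_type, playhead_s, notes))

    def has_severe_issue(self) -> bool:
        return any(i.issue_type in SEVERE_ISSUES for i in self.issues)

    def cta_label(self) -> str:
        """Label the step's button according to the issues logged so far."""
        if self.has_severe_issue():
            return "Fail and Flag for Producers"
        if self.issues:
            return "Continue with Issues"
        return "Pass and Continue"


if __name__ == "__main__":
    review = VideoReview("trailer_hls")
    review.flag("audio_sync", 12.5, "dialogue drifts out of sync")
    print(review.cta_label())
```

Capturing the timestamp at click time is what removes the manual note-taking step: the tester never has to pause, read the playhead, and type it into a spreadsheet.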

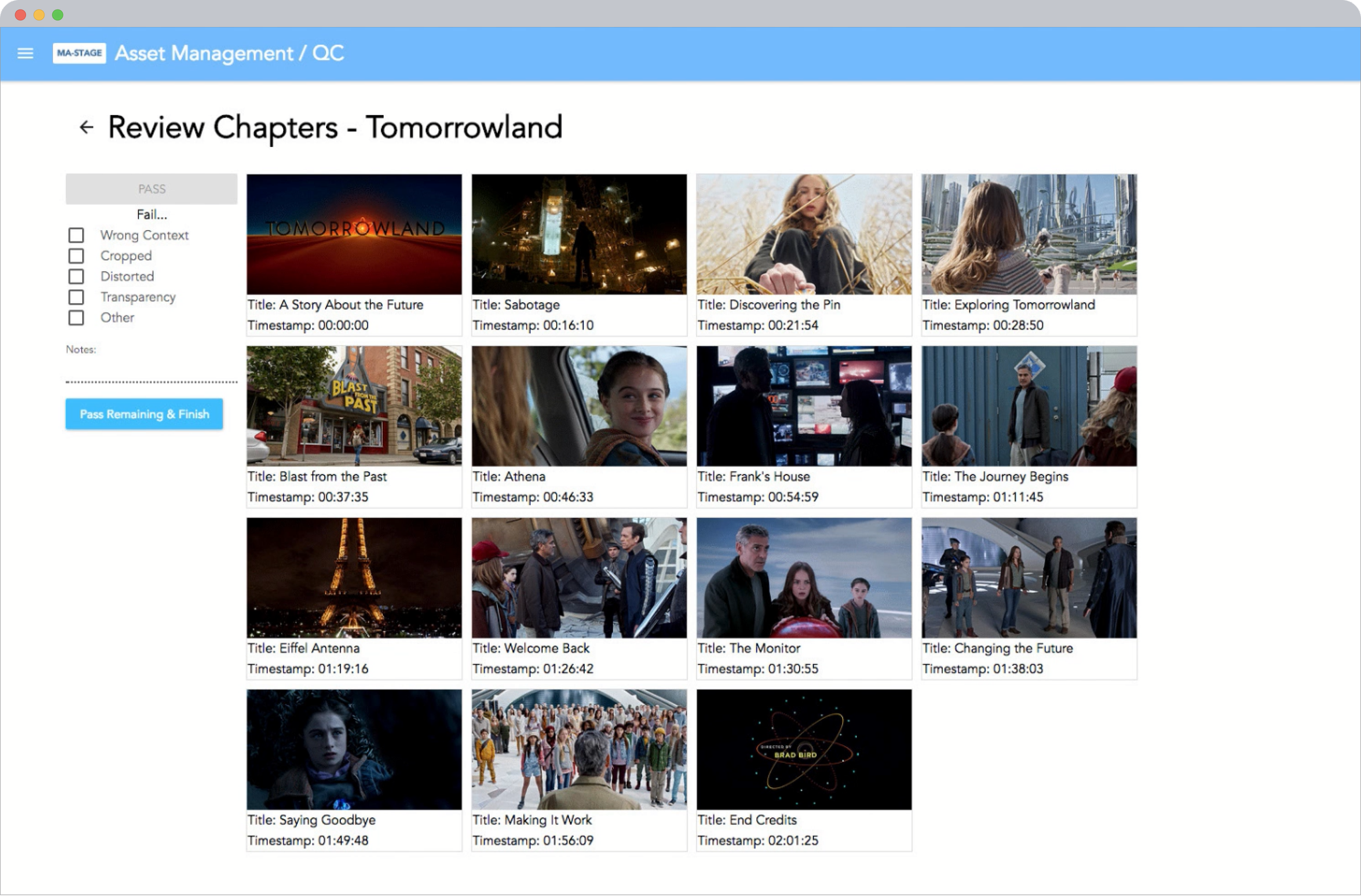

Figure 4. Chapters and their corresponding thumbnails are checked for accuracy, context and thumbnail quality.

Figure 5. Key art and title screens are presented in each of the required aspect ratios, with overlays indicating safe zone boundaries (e.g., whether a character’s face would be cut off or partly obscured); other issues can be tagged quickly.

QC Report

The net result of the QC Testers’ reviews is the QC Report, which is made available in real time and used by MoviesAnywhere Producers to resolve title asset issues.